Artificial intelligence is no longer just a concept from science fiction. It has become part of our daily lives, from virtual assistants managing schedules to algorithms shaping what we see online. Yet as AI systems grow more advanced, a fundamental question emerges: can artificial intelligence have a conscience? Can a machine understand ethics, morality, or responsibility the way humans do? This question is not just theoretical. It challenges how we design, use, and trust the technology that increasingly surrounds us.

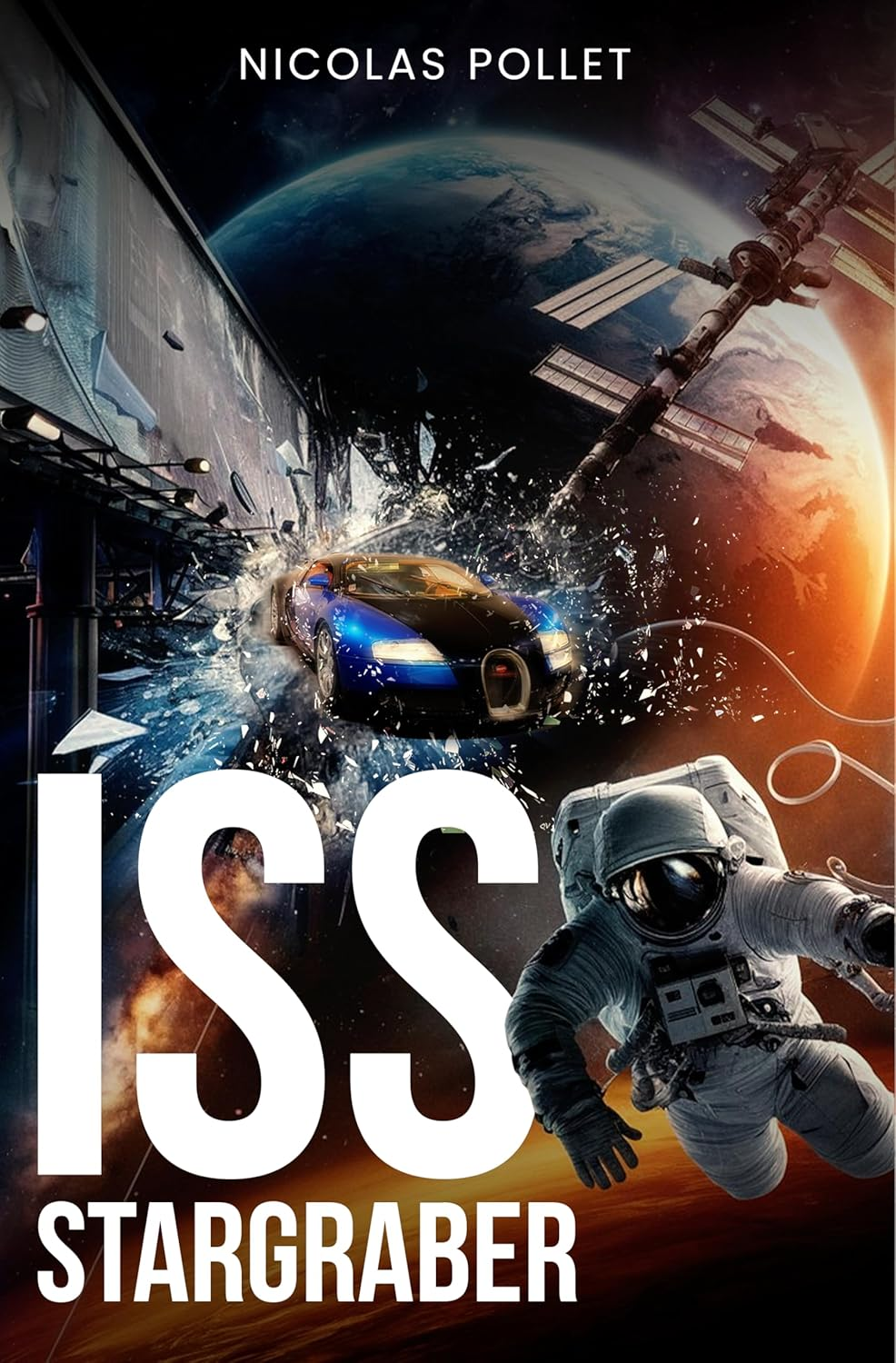

Science fiction has long explored the idea of machines with awareness or moral understanding. From Isaac Asimov’s Three Laws of Robotics to modern explorations of AI in films and novels, these stories push us to reflect on what makes us human and what responsibilities we have toward intelligent systems. Nicolas Pollet’s ISS Stargraber presents a fascinating take on this question. On the Stargraber orbital station, advanced virtual systems, such as Tilbert, the personal AI butler, interact seamlessly with humans. These AI systems demonstrate intelligence, adaptability, and a level of responsiveness that makes them feel almost conscious. They are more than tools; they are companions that mirror human behavior, raising important questions about attachment, trust, and moral judgment.

The presence of AI in ISS Stargraber reflects a growing societal fascination with conscious machines. People are drawn to AI not just for convenience, but also because it can simulate understanding and empathy. This attachment reveals a lot about human nature: our desire for connection, our need to delegate responsibility, and our hope that machines can help us make better choices. Yet it also underscores the potential risks. A machine may act intelligently, but it lacks lived experience, emotional understanding, and the ethical framework that guides human behavior. The novel illustrates how reliance on AI systems without proper oversight can have severe consequences, particularly in high-stakes environments like the Stargraber station.

Real-world parallels are increasingly evident. Autonomous vehicles must make split-second decisions that carry moral weight. Healthcare AI can suggest treatments, yet responsibility ultimately falls on human practitioners. AI can process information and follow complex algorithms, but conscience, the ability to reflect on right and wrong, remains a uniquely human trait. The question is whether we can create systems that not only act intelligently but also align with ethical principles that serve humanity rather than just efficiency or convenience.

Exploring these themes through fiction lets readers consider both the possibilities and the dangers from a safe distance. ISS Stargraber uses its futuristic setting to highlight the tension between human judgment and machine assistance, showing that technology alone cannot replace conscience or responsibility. It reminds us that while AI may amplify human capabilities, it cannot substitute for the moral and emotional depth that comes from being human.

For readers interested in examining the ethical and emotional dimensions of advanced technology, ISS Stargraber is a thought-provoking choice. It combines thrilling adventure with a careful exploration of humanity’s evolving relationship with intelligent machines, offering insights that are relevant to both our present and our future.

Get your copy on Amazon: https://www.amazon.com/dp/1967963231